Politics & Game Theory

When Cooperation Works and When It Doesn't

This is The Curious Mind, by Álvaro Muñiz: a newsletter where you will learn about technical topics in an easy way, from decision-making to personal finance.

Game theory reveals a disturbing truth: rational self-interest can lead us to collectively terrible outcomes.

In the Prisoner's Dilemma, two criminals acting "optimally" for themselves both end up worse off than if they'd cooperated. This isn't just an academic puzzle: it explains why nations struggle to collaborate on climate change, why arms races spiral out of control, and why trust is so fragile in international politics. The mathematics are clear: when you can't trust your opponent, betrayal becomes rational, even when mutual cooperation would serve everyone better.

But there's hope: game theory also shows us exactly when and how cooperation can win.

The Prisoner’s Dilemma

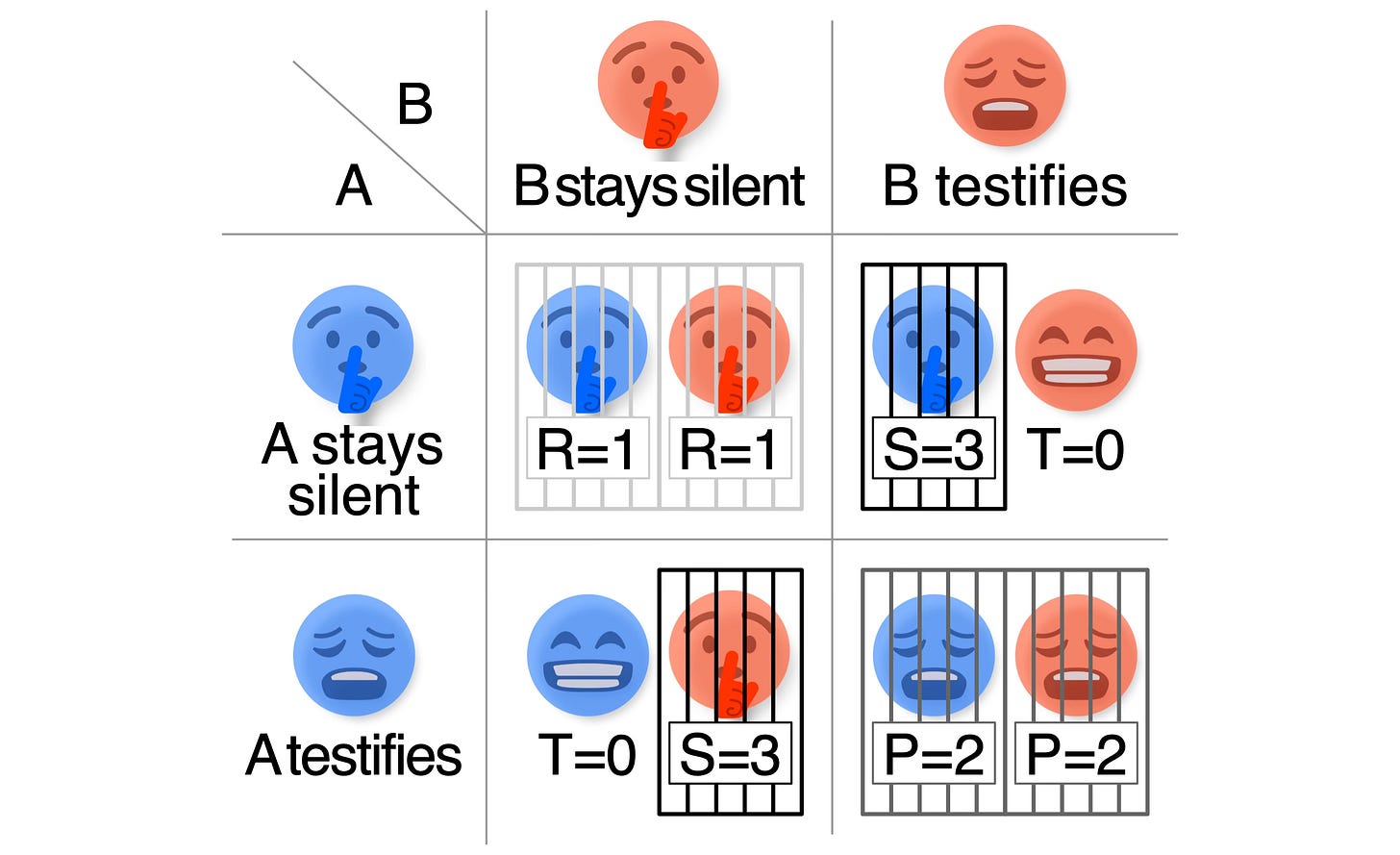

In one of our first posts, we used the Prisoner’s Dilemma to introduce Nash equilibria—a central concept in game theory. Here’s a recap:

The Prisoner’s Dilemma

Two members of a criminal gang are arrested by the police. They are separated and can’t communicate with each other, and they have to go to court the next day.

The initial sentence is 1 year in prison for each of them. However, the police individually offer each of them the following deal: if only one of them testifies against the other, that one will not go to jail and the other will be sentenced to 3 years instead.

But if both of them testify, each will be sentenced to 2 years instead of one.

What should they do to reduce their sentence as much as possible?

A Nash equilibrium occurs when each player’s strategy is optimal given the other player’s strategy. In this game, the only Nash equilibrium is for both players to testify.1

Look at the resulting outcome: both A and B are sentenced to 2 years. Had they cooperated and stayed silent, both would have been better off, each spending only 1 year in prison.

This is quite unsettling. Let me rephrase what is going on:

By not cooperating and looking after your own interest, you end up in a suboptimal situation.
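The definition of a Nash equilibrium can be checked mechanically. Here is a minimal Python sketch that tries every pair of actions and keeps those where neither player can shorten their sentence by switching alone. Sentences are written as negative years, and the names `PAYOFFS` and `is_nash` are purely illustrative.

```python
from itertools import product

# Payoffs as negative years in prison, indexed by (action_A, action_B).
# "S" = stay silent (cooperate), "T" = testify (defect).
PAYOFFS = {
    ("S", "S"): (-1, -1),
    ("S", "T"): (-3, 0),
    ("T", "S"): (0, -3),
    ("T", "T"): (-2, -2),
}
ACTIONS = ("S", "T")


def is_nash(a, b, payoffs=PAYOFFS):
    """True if neither player can do better by changing only their own action."""
    pay_a, pay_b = payoffs[(a, b)]
    a_is_best = all(payoffs[(alt, b)][0] <= pay_a for alt in ACTIONS)
    b_is_best = all(payoffs[(a, alt)][1] <= pay_b for alt in ACTIONS)
    return a_is_best and b_is_best


equilibria = [(a, b) for a, b in product(ACTIONS, repeat=2) if is_nash(a, b)]
print(equilibria)               # [('T', 'T')]
print(PAYOFFS[equilibria[0]])   # (-2, -2): worse for both than PAYOFFS[("S", "S")]
```

The search confirms the uncomfortable result: the only stable pair is mutual testimony, even though mutual silence gives both players a shorter sentence.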

From Prison Cells to Climate Change

This puzzle with prisoners and nice numbers has profound implications in the real world. Let me tweak the Prisoner’s Dilemma a bit and you’ll see why:

We don’t really need the two criminals to be separated and unable to communicate: as long as they don’t trust each other, the situation is effectively the same.

More generally, the dilemma is not limited to “two criminals trying to reduce their sentence”: it appears whenever two parties each try to maximise some outcome, each party’s actions affect the other’s outcome, and the payoffs have the same structure as in the Prisoner’s Dilemma.

“Staying silent” becomes “cooperating”, and “testifying” becomes “defecting”.

Does this ring a bell? Here’s an example:

Climate Change

Two states A & B are facing the potentially catastrophic results of climate change.

If both take action to tackle the problem (that is, they cooperate), the outcome is collectively the best: each spends some money on a green transition, while they grow on par and reduce emissions together.

Net outcome: (-1, -1)

However, if A works to reduce emissions while B doesn’t, A is at a clear disadvantage: imagine cleaning a river while a factory is polluting it upstream. Not only are you cleaning with no effect, but the factory owner is getting richer while you collect his shit. Furthermore, state B is in a better position than when cooperating: state A is doing the job for them, and they don’t need to invest anything in a green transition.

Net outcome: (-3, 0)

Lastly, if neither of them takes action, they save money in the short term but will eventually face the consequences of climate change.

Net outcome: (-2, -2)

What should the states do to reduce harm as much as possible?

And here’s the sad truth:

Just as in the Prisoner’s Dilemma, if you cannot trust your opponent, the best strategy is not to cooperate.
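A common textbook way to pin down “the same structure as the Prisoner’s Dilemma” is to label a player’s four payoffs T (temptation: you defect, they cooperate), R (reward for mutual cooperation), P (punishment for mutual defection) and S (the “sucker’s” payoff: you cooperate, they defect), and require T > R > P > S. The sketch below, assuming the climate numbers above, checks that condition and confirms directly that doing nothing beats acting whatever the other state does. The dictionary name `CLIMATE` is just illustrative.

```python
# Climate game payoffs from the text, as (payoff_A, payoff_B).
# "C" = take action on emissions (cooperate), "D" = do nothing (defect).
CLIMATE = {
    ("C", "C"): (-1, -1),
    ("C", "D"): (-3, 0),
    ("D", "C"): (0, -3),
    ("D", "D"): (-2, -2),
}

# Conventional labels for a symmetric 2x2 game, read from state A's payoffs.
T = CLIMATE[("D", "C")][0]  # temptation: A defects while B cooperates
R = CLIMATE[("C", "C")][0]  # reward for mutual cooperation
P = CLIMATE[("D", "D")][0]  # punishment for mutual defection
S = CLIMATE[("C", "D")][0]  # sucker's payoff: A cooperates while B defects

is_prisoners_dilemma = T > R > P > S

# For each possible action of B, compare A's payoff from defecting vs cooperating.
defect_dominates = all(
    CLIMATE[("D", b)][0] > CLIMATE[("C", b)][0] for b in ("C", "D")
)

print(is_prisoners_dilemma, defect_dominates)  # True True
```

Because defecting is strictly better against either choice of the other state, a state that cannot trust its counterpart has no individual reason to act, exactly as with the prisoners.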

Can Trust Break the Trap?

Clearly, things are not so simple in socioeconomic affairs.

Some countries trust each other and cooperate, while others don’t. These “cooperation partners”, our allies, are built through history by trial and error. Crucially, alliances change over time: someone can cooperate for a while, until they don’t.

Game theory has studied these time-dependent cooperative networks and how to adapt strategies accordingly. For example, suppose you face the Prisoner’s Dilemma multiple times against the same opponent: this is the so-called iterated Prisoner’s Dilemma.

This changes things dramatically: you can see how your opponent acts (do they cooperate or not?) and adapt your subsequent actions accordingly. The game becomes less about a single decision and more about building a reputation.

How would you act in such a situation?

Why Always Defecting Fails in the Real World

Interestingly, if the number of rounds is fixed and known in advance, the Nash equilibrium of the iterated Prisoner’s Dilemma is still to always defect (which can be proved by backward induction, starting from the last round). In other words, the math still says: betray, betray, betray.
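For readers who want the induction spelled out, here is a sketch. It assumes the game lasts a fixed number of rounds $N$ that both players know, and writes $u_A(a, b)$ for A’s payoff in a single round when A plays $a$ and B plays $b$ (with $C$ for cooperate and $D$ for defect).

In the last round there is no future to protect, so it is just the one-shot game, where defecting is better whatever the opponent does:

$$u_A(D, b) > u_A(C, b) \quad \text{for } b \in \{C, D\} \qquad (\text{e.g. } 0 > -1 \text{ and } -2 > -3).$$

Now suppose both players defect in rounds $k+1, \dots, N$ no matter what happened before. Then A’s total payoff is

$$U_A = \sum_{t=1}^{k-1} u_A(a_t, b_t) + u_A(a_k, b_k) + (N - k)\, u_A(D, D).$$

The first sum is already played and the last term is fixed, so A’s choice in round $k$ only affects $u_A(a_k, b_k)$, which is maximised by defecting; the same holds for B. Hence both defect in round $k$ too, and by induction in every round.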

However, just as in the one-shot Prisoner’s Dilemma, the Nash equilibrium is not the best outcome for either party: if you trust your opponent and believe in cooperation, the best thing to do is to cooperate.

How should you act in a world where your opponent might cooperate?

Tit-for-Tat

This strategy was developed by Anatol Borisovich Rapoport, a mathematical psychologist (apparently such a profession exists). It was the best-performing deterministic strategy for the iterated Prisoner’s Dilemma in early computer tournaments.

It can be described with two simple rules:

On the first round, always cooperate.

On subsequent rounds, mimic your opponent’s last action: cooperate if they cooperated, don’t if they didn’t.

In other words:

Aim to cooperate, but retaliate if needed.

You start by trusting someone, and keep trusting them as long as they cooperate. However, you are no fool: if they stop being your ally and defect, you retaliate.
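The two rules translate almost directly into code. Below is a minimal Python sketch of an iterated game, assuming the per-round payoffs from the prisoners’ story and a fixed 10 rounds. The helpers `play`, `always_defect` and `always_cooperate` are hypothetical, written only for comparison; this is not the original tournament code.

```python
# Per-round payoffs (negative years), indexed by (my_move, their_move).
# "C" = cooperate (stay silent), "D" = defect (testify).
PAYOFF = {
    ("C", "C"): -1,
    ("C", "D"): -3,
    ("D", "C"): 0,
    ("D", "D"): -2,
}


def tit_for_tat(my_history, their_history):
    # Cooperate on the first round, then copy the opponent's previous move.
    return their_history[-1] if their_history else "C"


def always_defect(my_history, their_history):
    return "D"


def always_cooperate(my_history, their_history):
    return "C"


def play(strategy_a, strategy_b, rounds=10):
    """Play an iterated Prisoner's Dilemma and return the total payoffs (A, B)."""
    hist_a, hist_b = [], []
    total_a = total_b = 0
    for _ in range(rounds):
        # Each player sees only the moves made so far, not the current one.
        move_a = strategy_a(hist_a, hist_b)
        move_b = strategy_b(hist_b, hist_a)
        total_a += PAYOFF[(move_a, move_b)]
        total_b += PAYOFF[(move_b, move_a)]
        hist_a.append(move_a)
        hist_b.append(move_b)
    return total_a, total_b


print(play(tit_for_tat, tit_for_tat))         # (-10, -10)
print(play(tit_for_tat, always_defect))       # (-21, -18)
print(play(always_cooperate, always_defect))  # (-30, 0)
```

Two Tit-for-Tat players cooperate every round. Against a permanent defector, Tit-for-Tat is exploited only once before it retaliates, while the unconditional cooperator is exploited in every round.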

Conclusion

Two things can be concluded from this discussion.

First, it’s encouraging that mathematics tells us that, if cooperation and trust can be established, cooperating is optimal. Always try to cooperate.

Second, the harsh truth is that, against someone who (consistently) defects, one must retaliate. Keep being the nice guy and you’ll just be exploited like a fool. As Luis & Pieter Garicano wrote in “Sixteen thoughts on Greenland”:

Even if ‘cooperating’ is less painful in the short run, against an opponent inclined to defect, the optimal response is retaliation. It is better to do this sooner rather than later […].
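The “sooner rather than later” point can be put into numbers. The sketch below assumes the prisoners’ per-round payoffs, a fixed 10 rounds, and a hypothetical “patient” strategy that tolerates k betrayals before retaliating for good. Against an opponent who always defects, k = 0 is simply always defecting, k = 1 behaves like Tit-for-Tat, and k = 10 amounts to never retaliating at all.

```python
def patient_vs_always_defect(k, rounds=10):
    """Total payoff of a hypothetical strategy that keeps cooperating until the
    opponent has defected k times, then defects for good, against an opponent
    who always defects. Per round: -3 if you cooperate, -2 if you defect."""
    total = 0
    for r in range(rounds):
        # Before round r, an always-defecting opponent has defected r times.
        my_move = "C" if r < k else "D"
        total += -3 if my_move == "C" else -2
    return total


for k in (0, 1, 3, 10):
    print(k, patient_vs_always_defect(k))
# 0 -20
# 1 -21
# 3 -23
# 10 -30
```

Under these assumptions every extra round of patience costs exactly one more year (the total is $-2 \cdot 10 - k$), which is the arithmetic behind retaliating early.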

The world isn't a one-shot game.

We interact repeatedly: in diplomacy, business, and daily life. Tit-for-Tat shows us that the sweet spot lies between naivety and cynicism: start with trust, but don't be exploited.

In a world of repeated interactions, kindness with accountability isn't just morally appealing—it's mathematically correct.


1. Indeed, take any other pair of strategies, for instance A testifies and B doesn’t. Then, given that A testifies, B could improve their outcome by also testifying (reducing their sentence from 3 to 2 years). The same argument works if A doesn’t testify and B does. Lastly, if both of them stay silent, A could improve their outcome by testifying, reducing their sentence from 1 to 0 years (and the same applies to B). Conversely, if both testify, neither can gain by switching to silence alone: their sentence would rise from 2 to 3 years.
